just closing the process running on that local port. A typical output would be:

System.IO.IOException: Failed to bind to address http://127.0.0.1:5000: address already in use.

We can locate which process is using the local port and then terminate that process. This frees up the port so we can use it again.
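As a minimal sketch of the lookup step on its own, the snippet below lists the process that owns a port without stopping anything. It assumes a Windows machine where the `Get-NetTCPConnection` cmdlet is available, and it uses port 5000 from the error message above purely as an example; the variable names are illustrative.

```powershell
# Example port taken from the error message above; adjust as needed
$port = 5000

# List listening sockets on that port; SilentlyContinue avoids an error when nothing matches
$listeners = Get-NetTCPConnection -LocalPort $port -State Listen -ErrorAction SilentlyContinue

if ($listeners) {
    # Resolve each unique owning PID to a process so it can be inspected first
    $listeners.OwningProcess |
        Select-Object -Unique |
        ForEach-Object { Get-Process -Id $_ } |
        Select-Object Id, ProcessName, Path
} else {
    Write-Host "Nothing is listening on port $port."
}
```

Inspecting the process name first is a cheap safeguard against stopping something unrelated that happens to hold the port.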

Here is a PowerShell function that finds the process, if any, using a specified local port and stops it.

<#
.SYNOPSIS
Stops the process using the specified local port.

.DESCRIPTION
This function finds the process, if any, using the specified local port and stops it.
If no process is using the port, the function exits.

.PARAMETER portId
The local port number to check for a process.

.OUTPUTS
System.Int32. Returns 0 if the port was free or the process was stopped, otherwise -1.

.EXAMPLE
Terminate-Port -portId 5000
#>
function Terminate-Port {
    param (
        [Parameter(Mandatory = $true)]
        [int]$portId
    )

    Write-Host "Looking for a process using local port: $portId"

    # Get-NetTCPConnection reports an error when nothing matches,
    # so suppress it and test the result instead
    $connection = Get-NetTCPConnection -LocalPort $portId -ErrorAction SilentlyContinue
    if (-not $connection) {
        Write-Host "Port $portId is not in use."
        return 0
    }

    # A port can have several connection entries; keep each owning PID once
    # and skip PID 0, which is not a process that can be stopped
    $processIds = $connection.OwningProcess | Select-Object -Unique | Where-Object { $_ -gt 0 }
    if (-not $processIds) {
        Write-Host "No process found using port $portId."
        return -1
    }

    Write-Host "Port $portId is in use by process ID $($processIds -join ', ')."

    # Stop the process; -ErrorAction Stop makes a failure reach the catch block
    try {
        Stop-Process -Id $processIds -Force -ErrorAction Stop
        Write-Host "Process running at port $portId was terminated."
        return 0
    } catch {
        Write-Host "Could not stop process. Reason: $_"
        return -1
    }
}

To terminate the process, we just run the command:

Terminate-Port -portId 5000

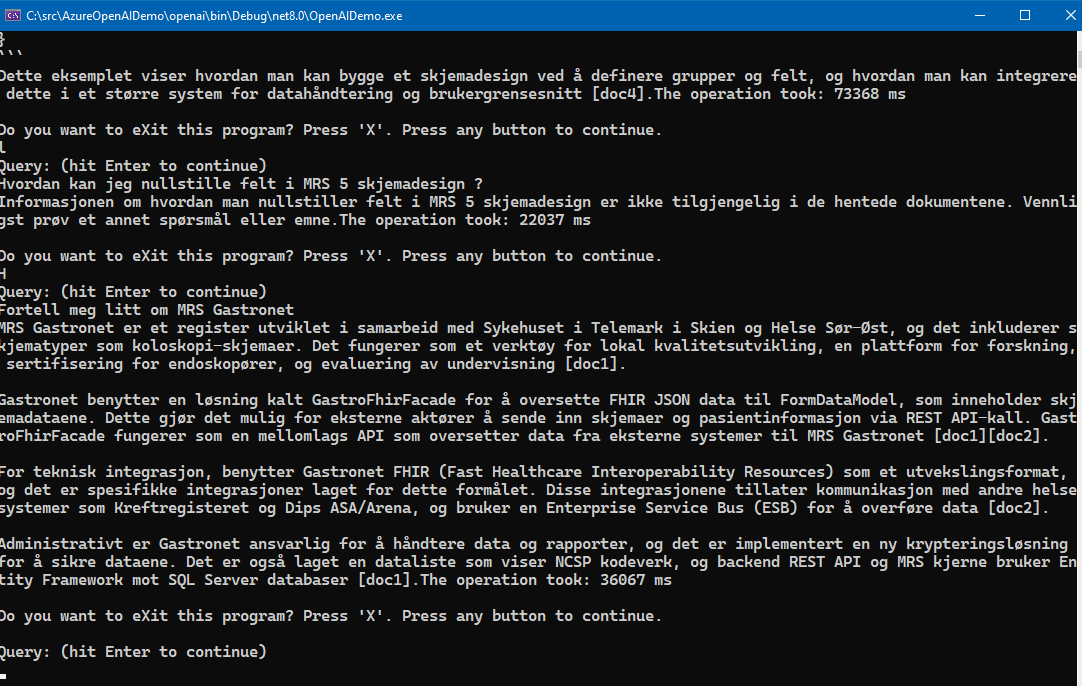

Screenshots of the function being run:

The screenshots show the state BEFORE and AFTER the command Terminate-Port is run. Note that the process running at the given portId was terminated and the local port was freed up again.
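To confirm the same result without screenshots, a quick check along these lines could be run afterwards. This is only a sketch, assuming `Get-NetTCPConnection` is available and again using port 5000 as the example value.

```powershell
# Example port; use the same value that was passed to Terminate-Port
$port = 5000

# Look for anything still listening on the port; no match yields $null
$listener = Get-NetTCPConnection -LocalPort $port -State Listen -ErrorAction SilentlyContinue

if ($listener) {
    $owners = ($listener.OwningProcess | Select-Object -Unique) -join ', '
    Write-Host "Port $port is still held by process ID $owners."
} else {
    Write-Host "Port $port is free."
}
```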

AFTER

Please note that the Free tier of Azure AI Search is rather slow and seems to only allow queries at a certain interval, but it will suffice for trying the service out. To really test it in, for example, an intranet scenario, the Standard tier of Azure AI Search is recommended, at about 250 USD per month as noted.
